Awni Hannun

@awnihannun

Machine Learning Research @Apple

ID:245262377

https://awnihannun.com/ 31-01-2011 08:05:27

1.7K Tweets

16.5K Followers

247 Following

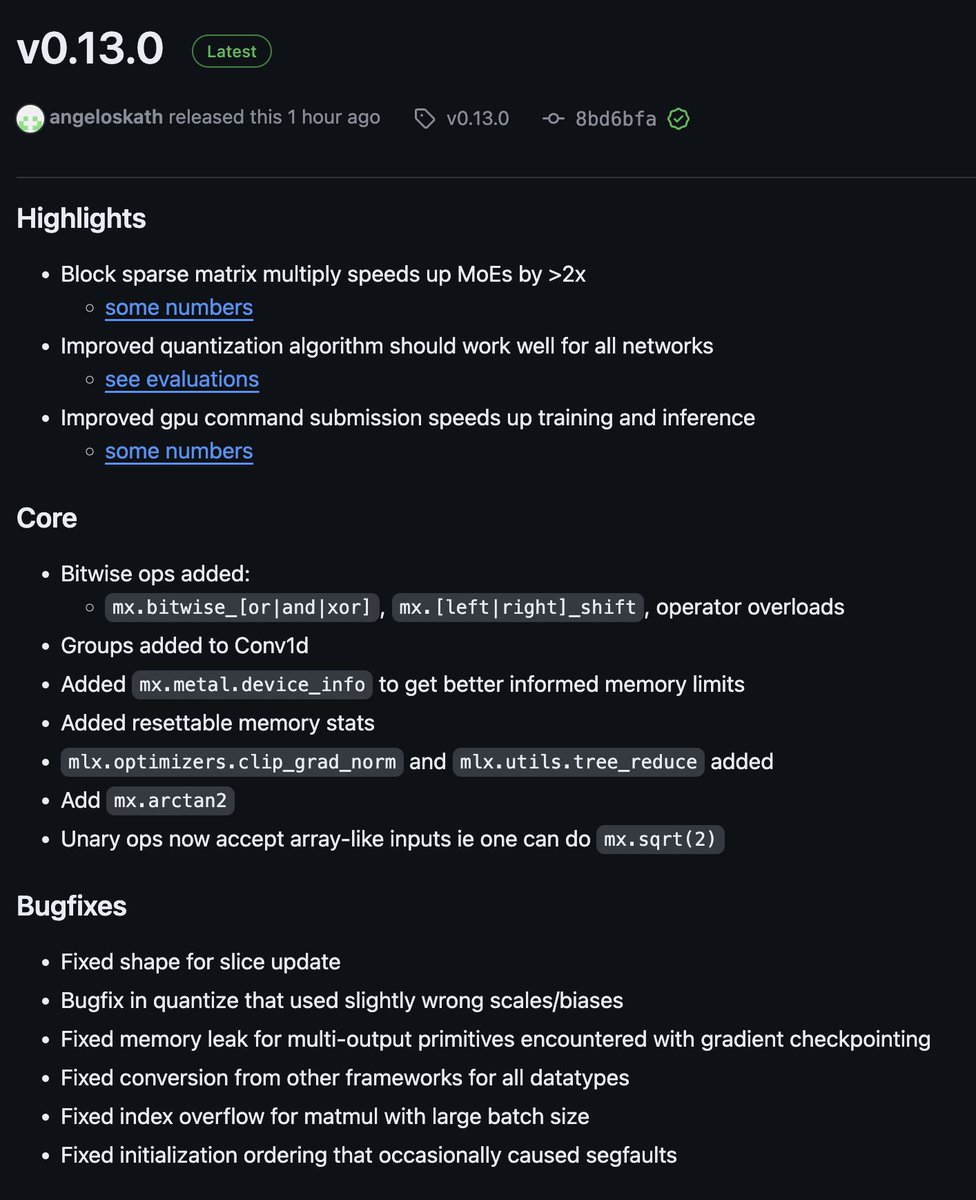

Fine-tuning the latest Google Gemma model locally using MLX

Just a reminder of the power and hackability of Apple MLX 💪

Great job Morning Coder 🔥

Eric Hartford MLX

2bit: huggingface.co/mlx-community/…

4bit: huggingface.co/mlx-community/…

8bit: huggingface.co/mlx-community/…

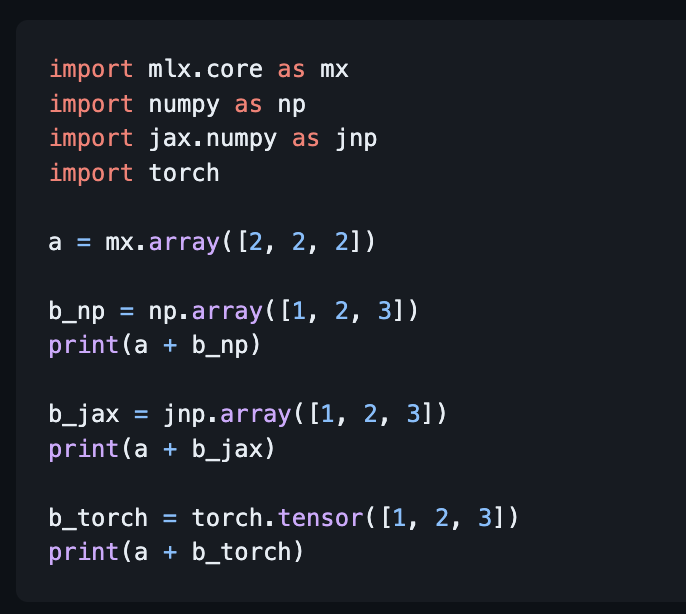

Cool feature of the latest MLX:

Most MLX ops can take input arrays from your (second 😉) favorite ML framework.

All thanks to the multi-framework array support in Wenzel Jakob's Nanobind.

✅︎ Tests passing for the Microsoft BitNet 1.58Bit LLM MLX implementation on Apple Silicon

This model from Microsoft is well suited to edge devices (e.g. iPad, iPhone, MacBook) since it uses 7x less memory and 71x less energy than LLaMA.

h/t Awni Hannun Ky⨋ Gom⨋z (U/ACC) (HIRING) Prince Canuma
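For context on the "1.58" in the model name: assuming the model follows the published BitNet b1.58 scheme, each weight takes one of three values (-1, 0, +1), so it carries log2(3) ≈ 1.58 bits of information. The sketch below shows that arithmetic and an absmean-style ternary rounding in plain Python. The helper name `ternarize` and the sample weights are hypothetical, and this is not the MLX implementation referenced in the tweet.

```python
import math

# Information content of a weight that can take one of three values.
BITS_PER_TERNARY_WEIGHT = math.log2(3)


def ternarize(weights, eps=1e-8):
    """Round weights to {-1, 0, +1} after scaling by their mean absolute value.

    Hypothetical helper sketching absmean quantization. Returns the
    ternary values and the scale needed to approximate the originals.
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    # Divide by the scale, round to the nearest integer, then clip to [-1, 1].
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale


if __name__ == "__main__":
    q, s = ternarize([0.9, -0.05, -1.2, 0.3])
    print(f"{BITS_PER_TERNARY_WEIGHT:.2f} bits per weight")
    print(q, s)
```

Multiplying the ternary values by the returned scale gives a coarse approximation of the original weights, which is where the memory savings would come from under these assumptions.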

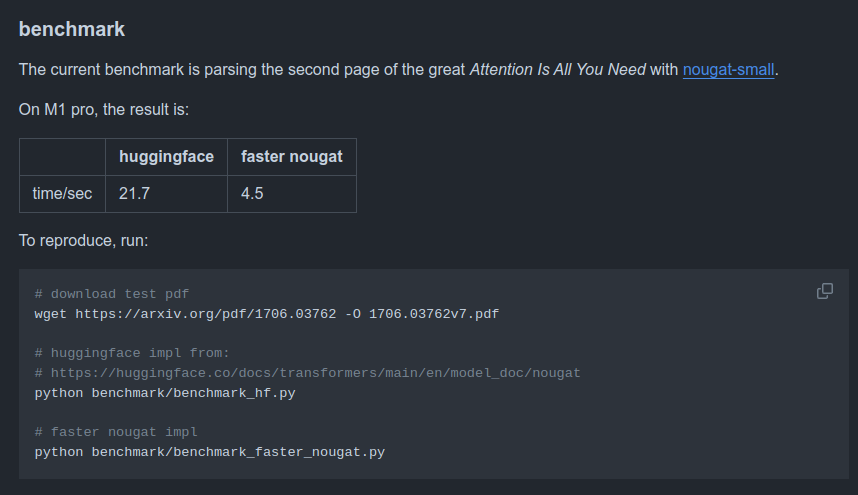

Cool new repo: faster-nougat

An MLX implementation of AI at Meta's Nougat for OCR-based RAG.

Code: github.com/zhuzilin/faste…

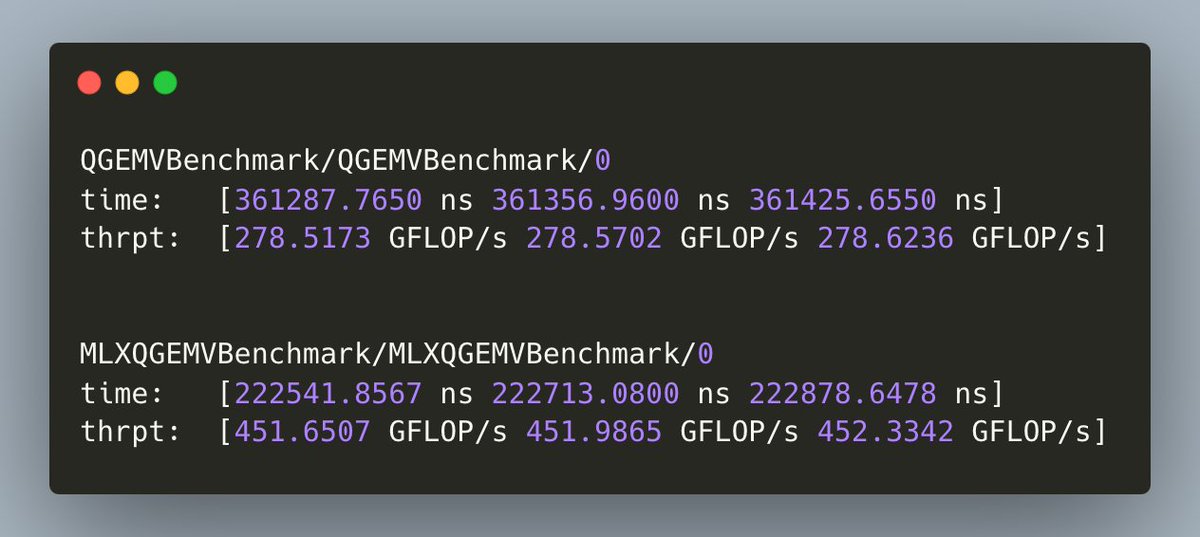

5x faster on an M1 Pro than the baseline, no magic, just MLX:

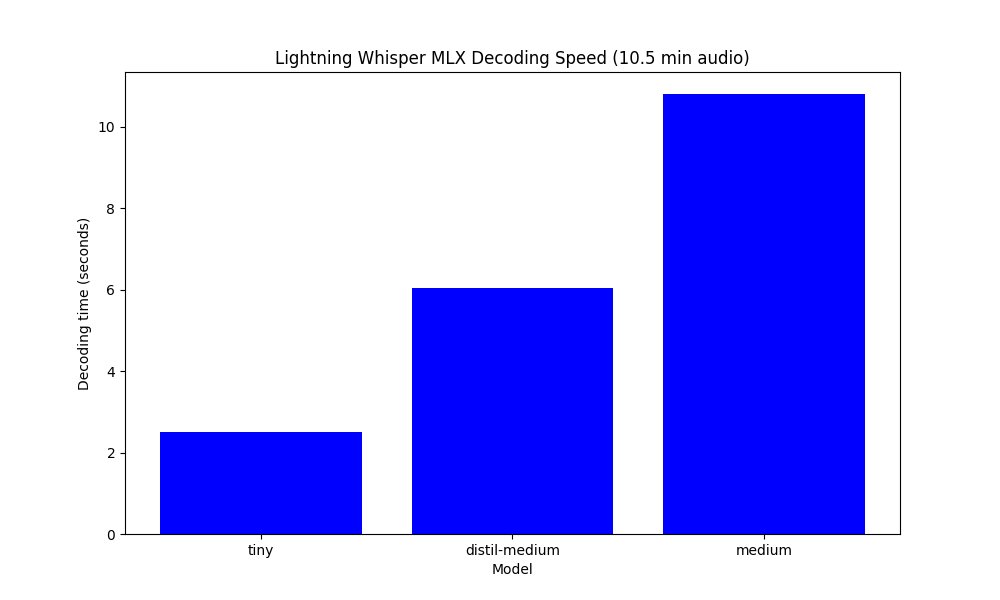

MLX: Today I had to transcribe some English and Italian speech to text, so I tried lightning-whisper-mlx and...

It is SUPER FAST! 🔥

M3 Max (40-core GPU): 10.5 min of audio in 6 seconds! 👀

Amazing work Mustafa Aljadery 🔝

And thanks as always to Awni Hannun for MLX powering so many great
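To put the quoted timing in perspective, here is a small sketch of the real-time-factor arithmetic. It uses only the two numbers from the tweet (10.5 minutes of audio, 6 seconds of processing); the function name `realtime_factor` is made up for illustration and is not part of lightning-whisper-mlx.

```python
def realtime_factor(audio_seconds, processing_seconds):
    """Return how many seconds of audio are processed per second of compute."""
    if processing_seconds <= 0:
        raise ValueError("processing_seconds must be positive")
    return audio_seconds / processing_seconds


if __name__ == "__main__":
    audio_seconds = 10.5 * 60  # 10.5 minutes of audio, as quoted in the tweet
    factor = realtime_factor(audio_seconds, 6)
    print(f"about {factor:.0f}x faster than real time")
```

With those figures the ratio works out to 630 / 6 = 105, i.e. roughly two orders of magnitude faster than playback speed.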

`mlx-embedding-models` update!

- now supports Nomic-BERT models thanks to a contribution from Zach Nussbaum!

- fixed a bug with mean-pooling

- has supported SPLADE for a while, I just forgot to tweet about it

pip install mlx-embedding-models

pypi.org/project/mlx-em…