Machine Learning at Georgia Tech

@mlatgt

The Machine Learning Center at Georgia Tech (ML@GT) is an interdisciplinary research center that trains the next generation of #machinelearning & #AI pioneers.

ID:783422205195583488

http://www.ml.gatech.edu 04-10-2016 21:43:03

2.5K Tweets

6.6K Followers

444 Following

CSE faculty and students were among the swarm of Georgia Tech researchers presenting last week at #NeurIPS2023! 🐝

Check out this work on table structure recognition from Anthony Peng and the Polo Data Club!

shengyun-peng.github.io/tsr-convstem

Georgia Tech Computing, Machine Learning at Georgia Tech, Georgia Tech Research

'We Can Achieve More When We Work Together': Graduating TA Adavya Bhutani Highlights Value of Human Interaction

👆This is a perfect sentiment today for the Graduating Class of 2023. Congrats on this milestone in your life journeys! #WeCanDoThat #IGotOut

cc.gatech.edu/news/we-can-ac…

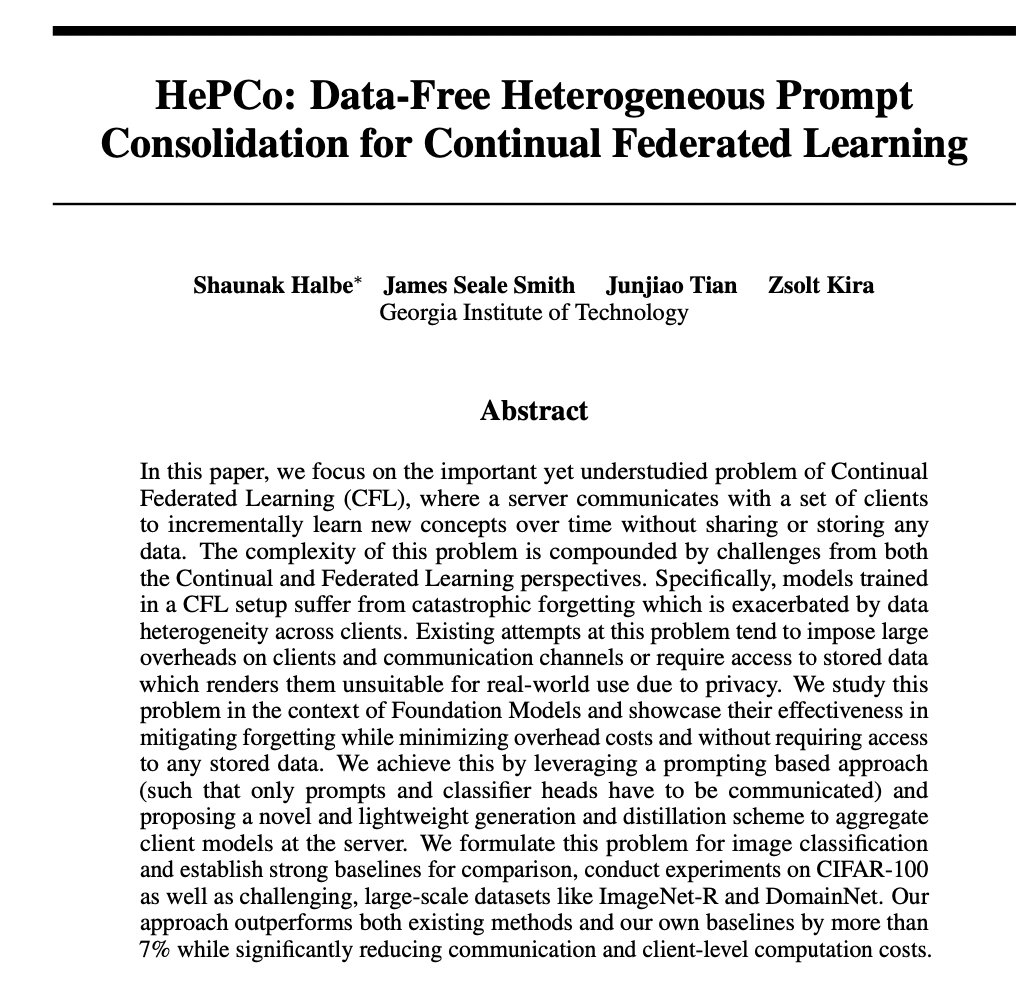

📢 I'll be presenting our work 'HePCo' at the #NeurIPS23 R0FoMo workshop tmrw and as an oral talk at the FL@FM workshop on the 16th!

HePCo is a lightweight adapter learning and aggregation scheme for fine-tuning foundation models under continual, heterogeneous data distributions.🧵

Had a lotta fun attending NeurIPS Conference 2023 & presenting my paper to an amazing community! So many interesting interactions w/ a lotta talented ppl!!

Thx to Matthew Gombolay for helping this happen! The work was done at the CORE Robotics Lab in Robotics@GT

Georgia Tech Computing, Georgia Tech School of Interactive Computing

Bo Dai's group is presenting 3⃣ papers at #NeurIPS2023! Among those, one was selected as an oral presentation and another as a spotlight! Great work, Bo! 🥳

You can read up on all THREE papers at Georgia Tech Computing's handy microsite ⬇️ covering NeurIPS Conference

sites.gatech.edu/research/neuri…

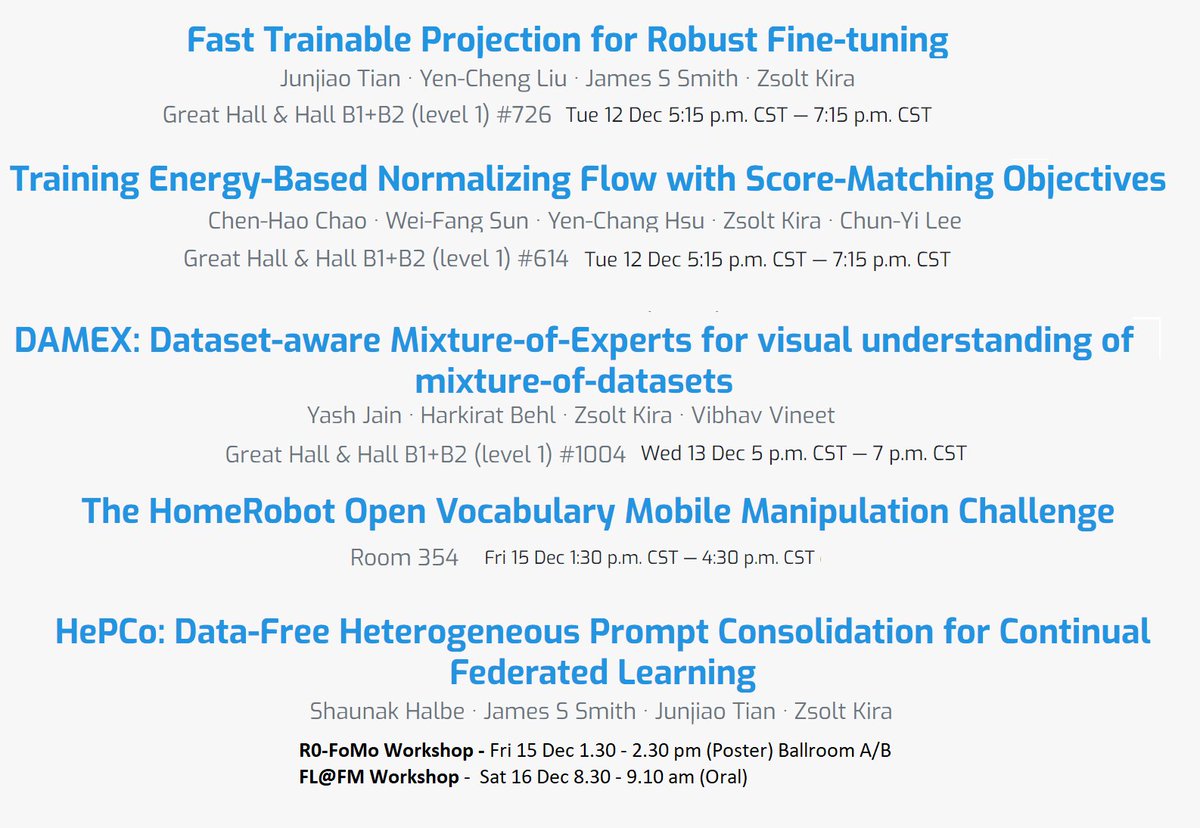

If you are attending NeurIPS Conference check out all of our papers and challenge! Robust fine-tuning, energy based models for generation, MoEs for object detection, open-vocabulary mobile manipulation, and continual federated learning!

First two are today 5:15pm CT!

Georgia Tech School of Interactive Computing, Machine Learning at Georgia Tech

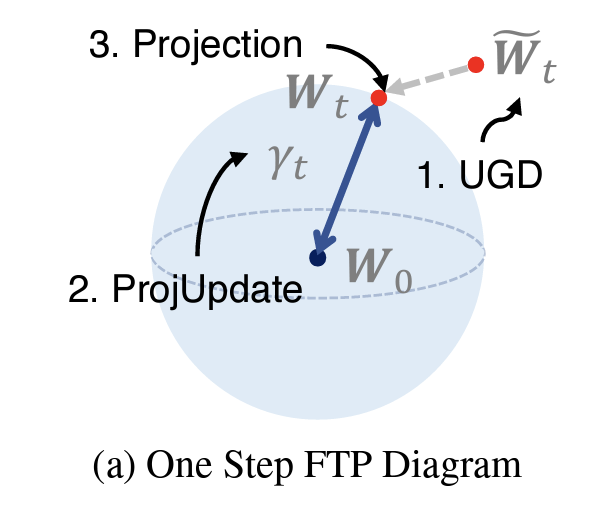

I will present our new work, Fast Trainable Projection (FTP), on robust fine-tuning at #NeurIPS2023 tomorrow (#726).

FTP is an optimizer to fine-tune a pre-trained model while automatically regularizing the deviation from the pre-trained weights to preserve generalization.

Ph.D. candidate Muhammed Fatih Balin and Prof. Ümit Çatalyürek are at #NeurIPS2023 presenting their new sampling algorithm called LaborSampler!

Check out the thread for more on their work, and stay tuned here for continued coverage of CSE at NeurIPS Conference!

Anqi Wu is presenting two papers this week at #NeurIPS2023, including one that was selected as a spotlight!

Both abstracts and links can be found at Georgia Tech's microsite for the conference! Check it out: sites.gatech.edu/research/neuri…

This Friday at #NeurIPS2023, Srijan Kumar gives an invited talk at the workshop on Robustness of Few-shot and Zero-shot Learning in Foundation Models!

The 🧵 below previews Kumar's work, and here is a link to Georgia Tech research at NeurIPS Conference:

sites.gatech.edu/research/neuri…

Am at #NeurIPS2023. PM or catch me! Looking to hire PhD/postdocs in Fall 24. Areas:

- Spatio-temporal ML: UQ, interpretability, zero/few shot and causal learning

- AI for health: decision making, multimodal data, AI for simulations, graph models

Georgia Tech Computing, Machine Learning at Georgia Tech, GaTech CSE

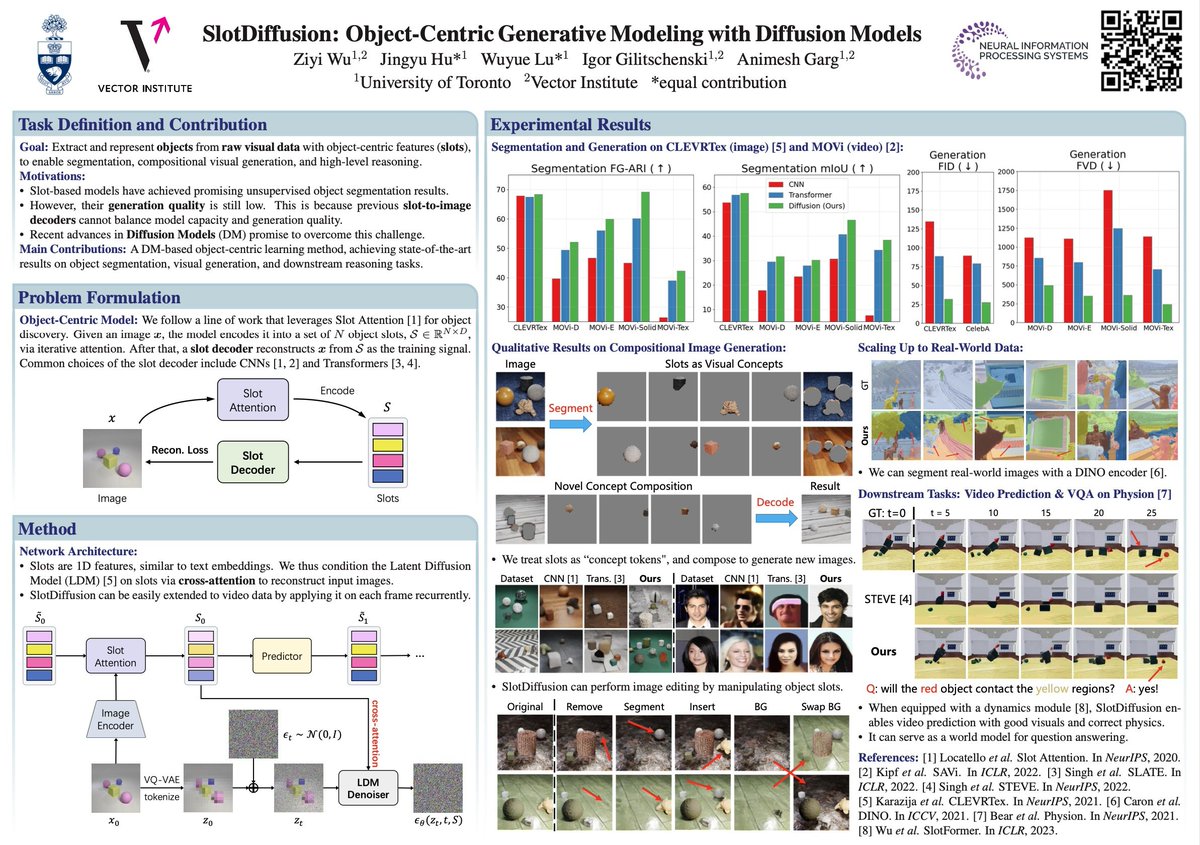

Come talk to us about SlotDiffusion - an object-centric Latent Diffusion Model (LDM) designed for both image and video data

🗓️ Wed, Dec 13, 10:45

📌 Poster #611 Hall B1+B2 (level 1)

The students couldn't be here but the advisors (Igor Gilitschenski) are equally fun to chat with!

See you at #NeurIPS2023! I'll be presenting our collaboration with Google DeepMind at the first poster session Tuesday 10:45am-12:45pm in the main hall. Happy to chat about deep learning theory, generalization and robustness, and the best gumbo in town! arxiv.org/abs/2309.08534