🎉 We just released code, data and model for our #SIGGRAPH2023 work 'CT2Hair'!! CT2Hair achieves fully automatic reconstruction of production-ready hair strands from computed tomography (CT) scans!!

Code&Data: github.com/facebookresear…

Project: yuefanshen.net/CTHair

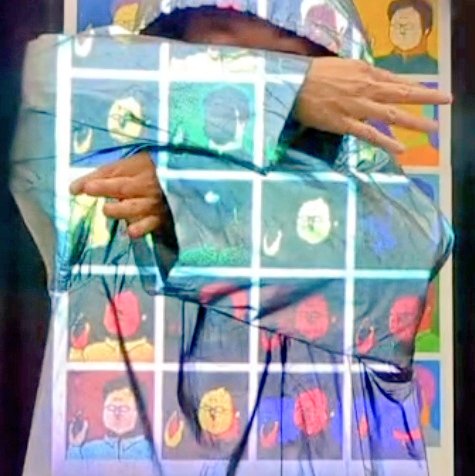

Meta's new HMD demo was amazing: I could read text more crisply than ever before in my life.

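Its varifocal feature physically moves the display back and forth to change the focal distance based on where you're looking, so nearby objects look overwhelmingly clear.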

So the reason VR has been hard to see clearly until now wasn't a lack of resolution, but the lack of varifocal...!

#SIGGRAPH2023

The code for "CT2Hair", our #SIGGRAPH2023 paper on reconstructing high-precision 3D hair models from CT scans, has been released on GitHub!!!

The CT data and generated results are also available for download, so please give it a try!

github.com/facebookresear…

Hear about Materialistic on Thursday at 2 PM in the Material Acquisition session in Room 502 AB! #SIGGRAPH2023

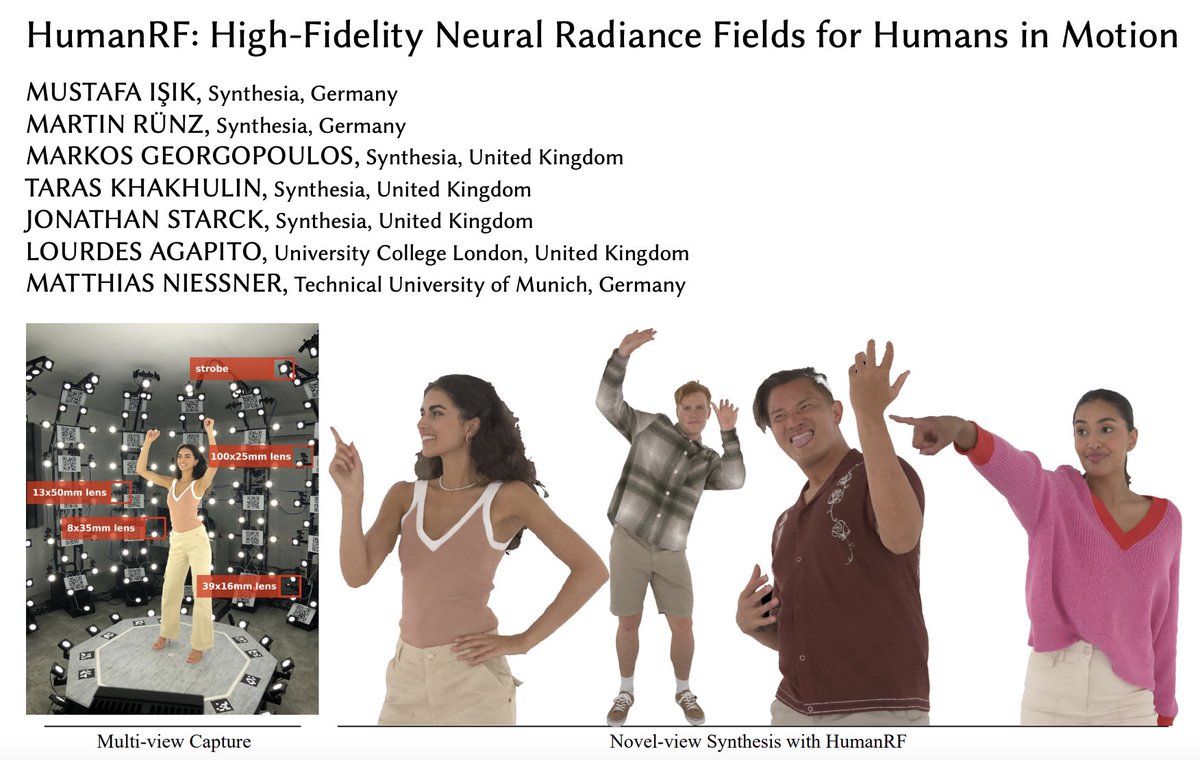

I’ll be presenting our work HumanRF today at #SIGGRAPH2023. It’ll take place in Petree Hall C during the “NeRF for Avatars” session at 15:45. Looking forward to insightful discussions about HumanRF and NeRFs in general. See you there!

Train your avatars to interact with 3D scenes. We use adversarial imitation learning and reinforcement learning to train physically simulated characters that perform scene interaction tasks in a natural and life-like manner. Today at #SIGGRAPH2023.

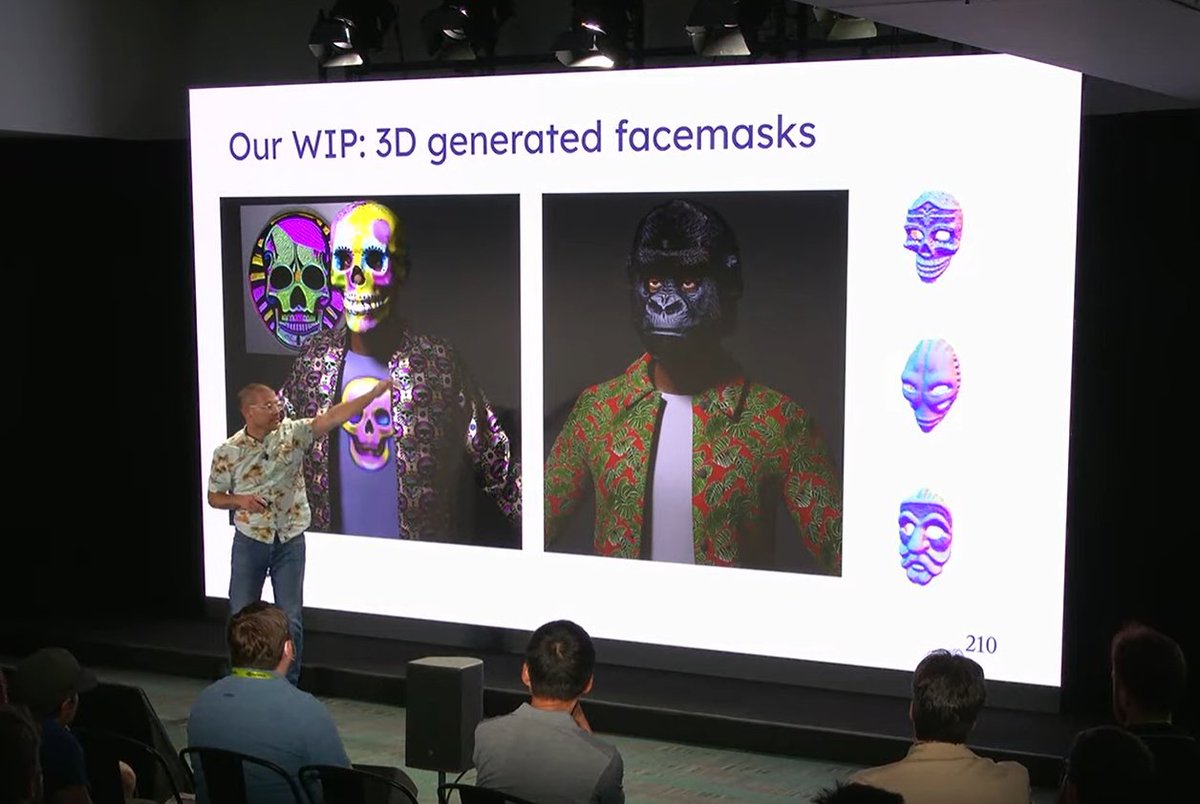

If you missed our presentation or weren't at #SIGGRAPH2023 - our talk on 3D asset generation is on YouTube!

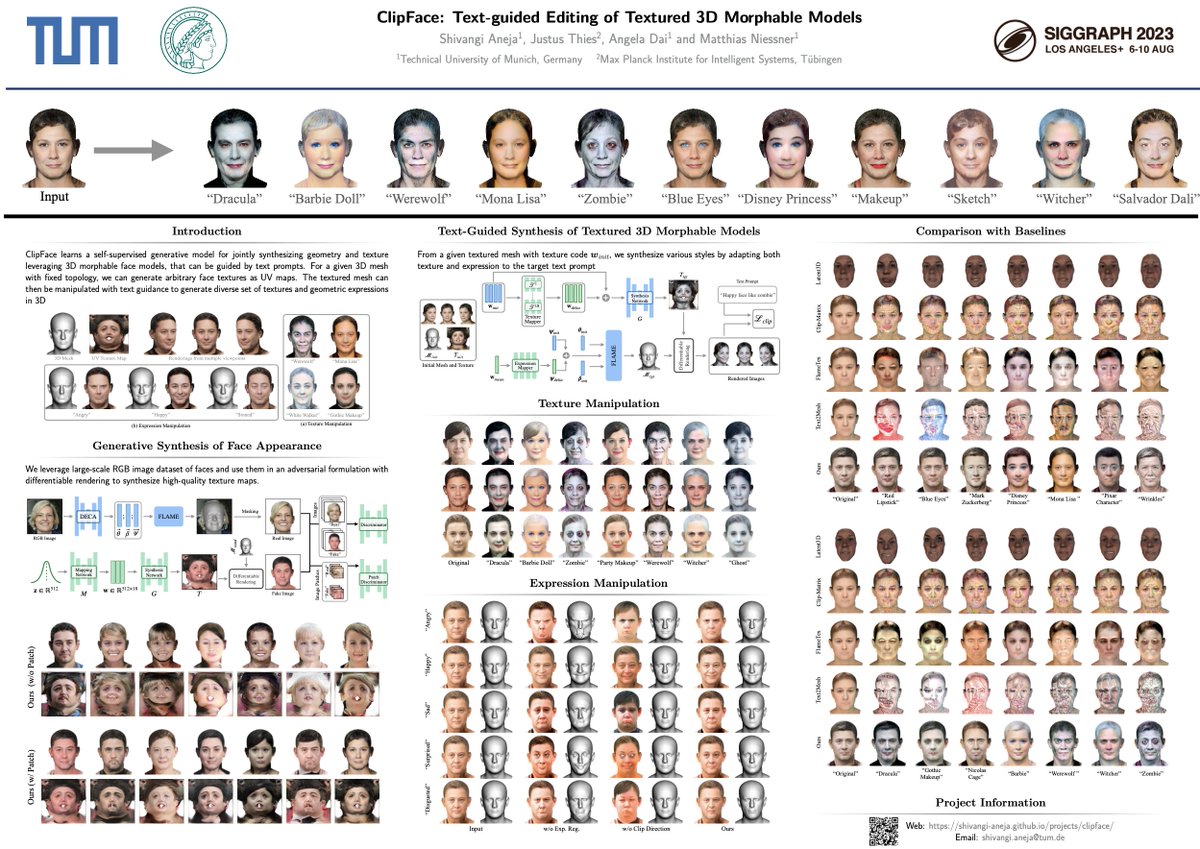

Check out the talk on our #SIGGRAPH2023 technical paper ClipFace tomorrow morning (Thursday) from 11:36 to 11:46 AM in Petree Hall D. Also come by to chat about digital humans during the poster session afterwards.

We're not only hardware-focused. Check out how our talented team is empowering developers with the next generation of mobile graphics at #SIGGRAPH2023

Learn more: oppo.com/en/newsroom/pr…