Qi Sun

@qisun0

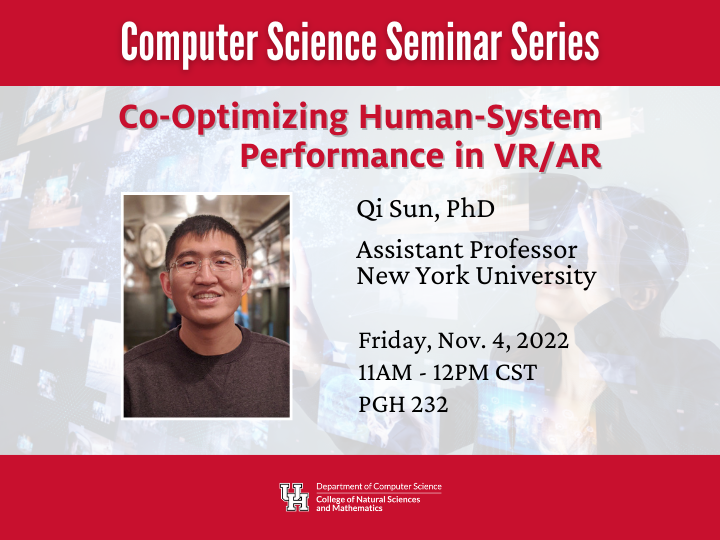

Assistant Professor at New York University directing https://t.co/Tm0Ri6oAjk | VR/AR researcher

ID:1239282268692082688

http://qisun.me/ 15-03-2020 20:08:23

90 Tweets

602 Followers

351 Following

I am looking for PhDs, Postdocs and Interns to explore exciting research in Optics and Generative AI in my new UNC-Chapel Hill lab.

Apply now: cs.unc.edu/graduate/gradu…

Please share and spread the news :)

Join our team at Universidad de Zaragoza for PhD and Postdoc positions within the 'IMMERSENSE' project. 🌐Help us explore and computationally model Multimodal Perception in Virtual Reality. 🚀More details in the attached image! 📚 #PhD #Postdoc #VirtualReality #ResearchOpportunity

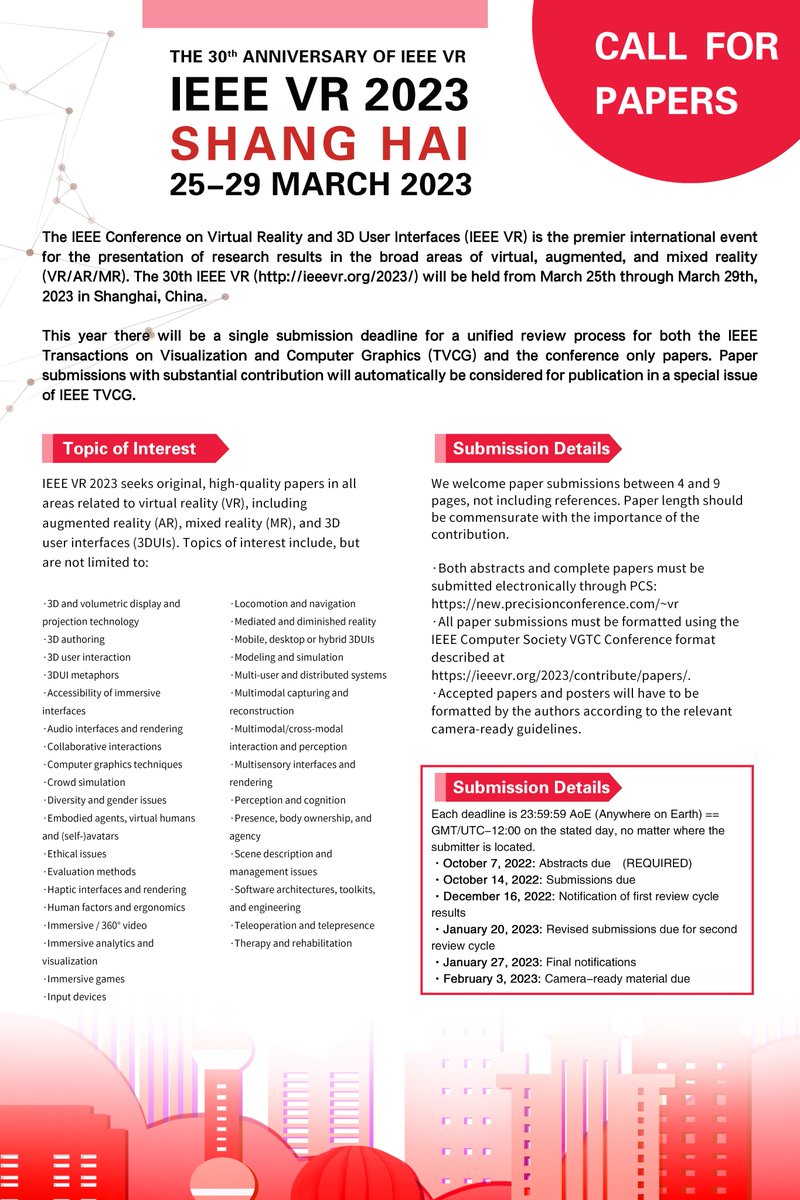

If you are attending #SIGGRAPH2023, don't miss our e-tech exhibition on imperceptible #VR / #AR display power saving 👉 s2023.siggraph.org/presentation/?… . Also, come to our paper talks on an ergonomic neck saver 👉 s2023.siggraph.org/presentation/?… and the ISMAR Best Paper Fov- #NeRF 👉 s2023.siggraph.org/presentation/?…!

My lab is looking for a postdoc in the area of context-aware augmented reality: maria.gorlatova.com/2023/07/postdo…. Great opportunity for folks with expertise in ML in mobile systems or pervasive sensing contexts (e.g., human activity recognition, edge video analytics, ML for QoS or QoE).

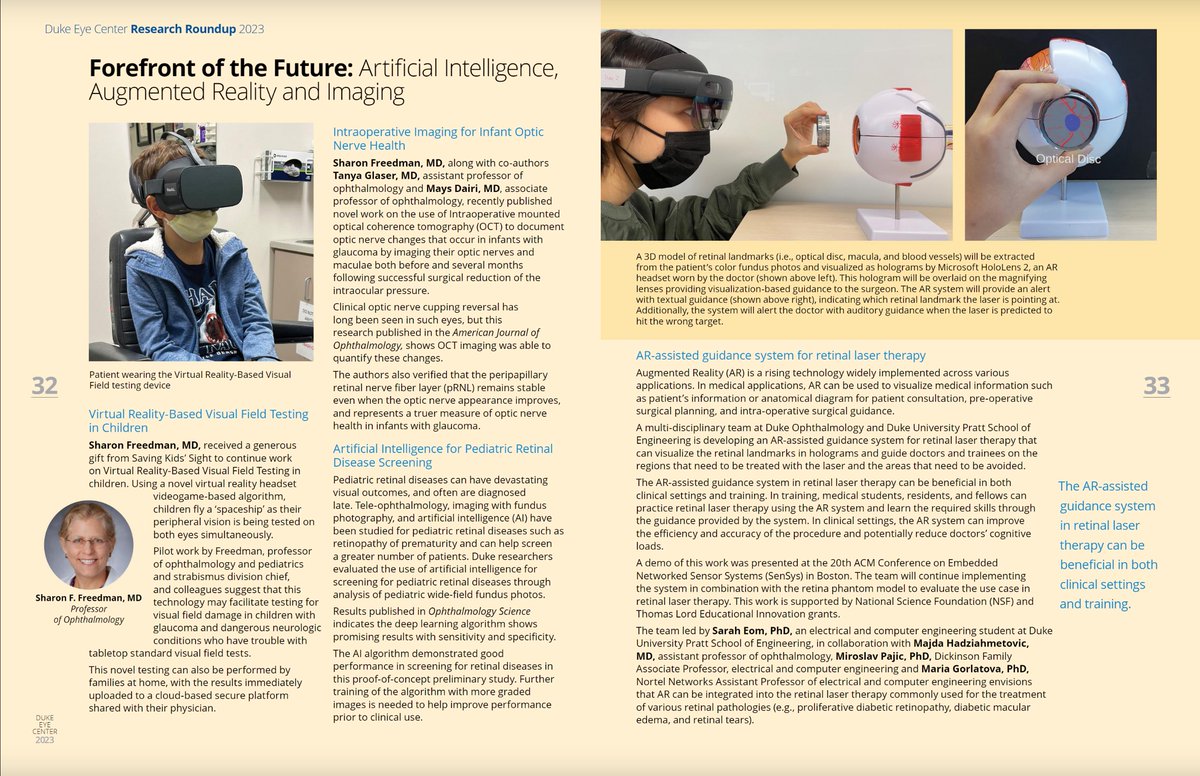

Our work on augmented reality for retinal laser therapy is featured in the 2023 Duke Eye Center VISION Magazine! Joint project with Miroslav Pajic and Majda Hadziahmetovic, led by my PhD student Sarah Eom. #AugmentedReality

📢Join us at SAP if you are attending #SIGGRAPH2023 in LA and want to spend the weekend before it learning about exciting perception research across VR/AR/AI. Beyond the incredible papers, we have lined up renowned keynote speakers from the VR industry and neuroscience! sap.acm.org/2023/pages/pro…

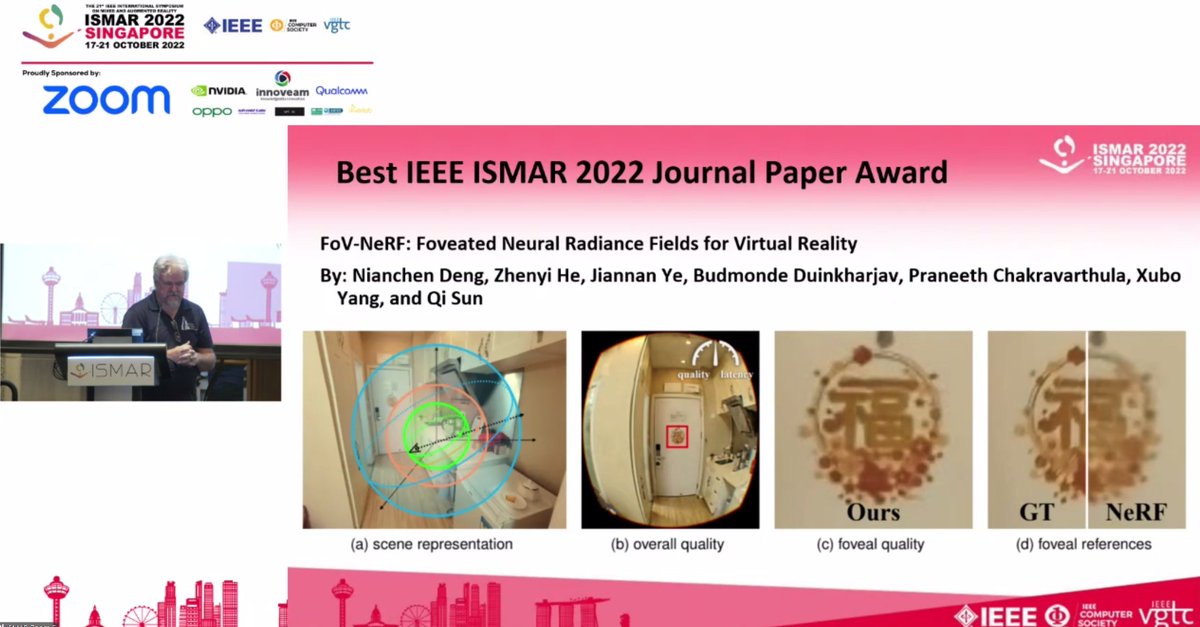

Our research received another Best Paper Award this year at #ISMAR2022! We leverage human visual perception to bring neural rendering #NeRF to challenging #VR / #AR displays that require high resolution and high FPS in stereo. It is also open source! immersivecomputinglab.org/publication/fo…

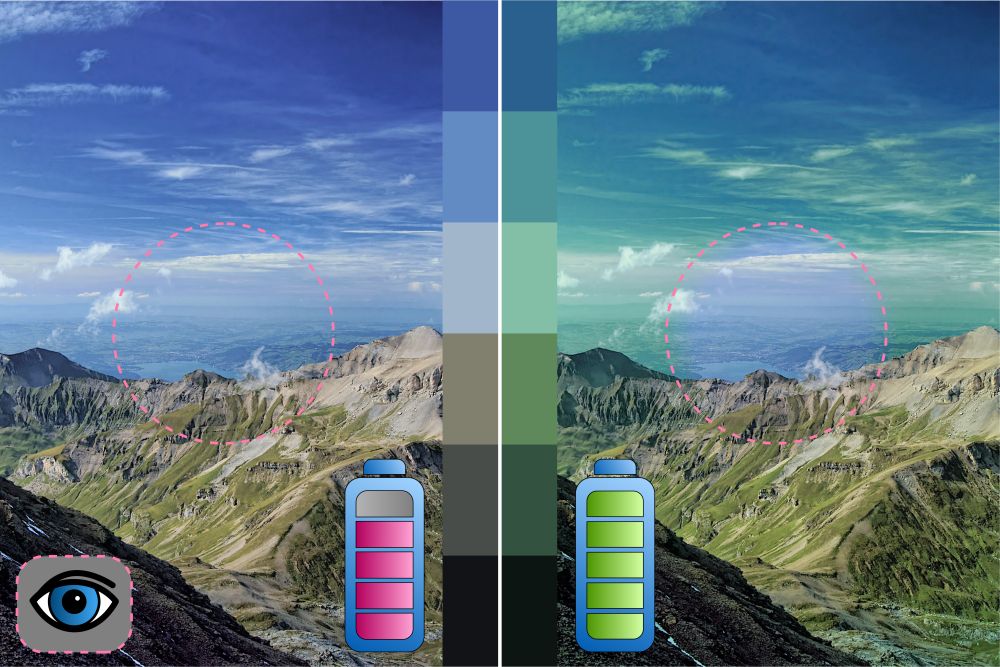

20 lines of shader can save 20% of VR display power! Check out our upcoming SIGGRAPH Asia paper on leveraging human color perception for display energy reduction. immersivecomputinglab.org/publication/co… Kudos to my students Monde Duinkharjav and Kenny Chen for leading the work, and thanks to Yuhao Zhu for a great collaboration.

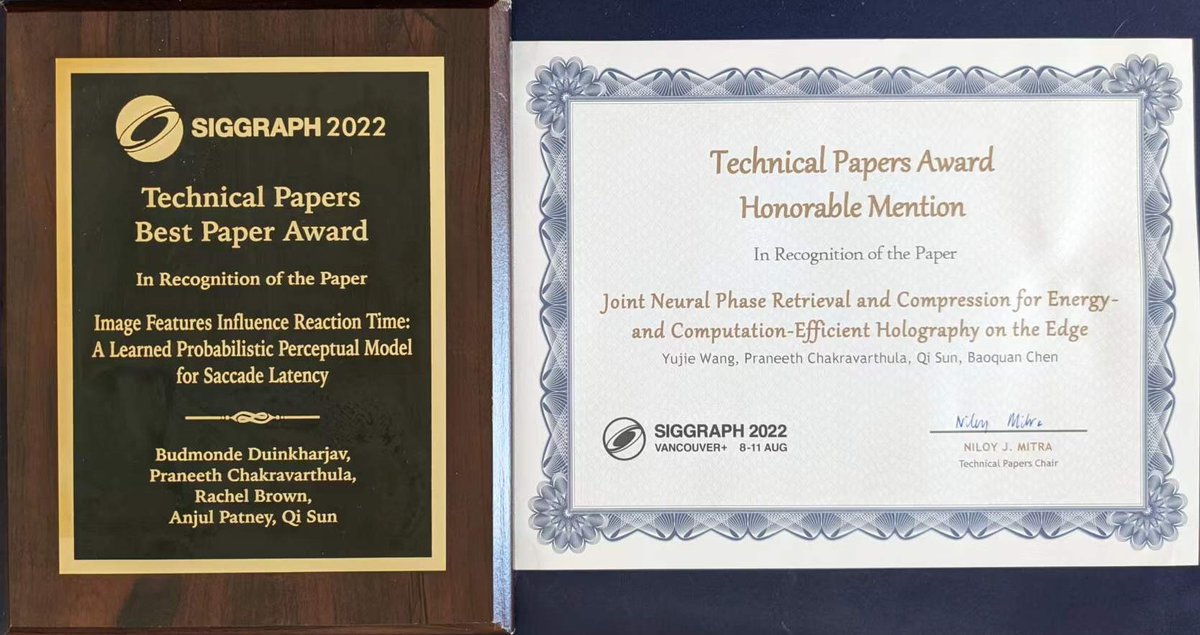

Back from #SIGGRAPH2022 with two paper recognitions. But more importantly, message me if you'd like to discuss human performance or cloud computing for VR/AR and computer graphics for future exploration!